Available for Team Plan customers, the tool evaluates AI-generated content and flags potential similarities to known characters, celebrity likenesses, brand logos, and other sensitive references before production.

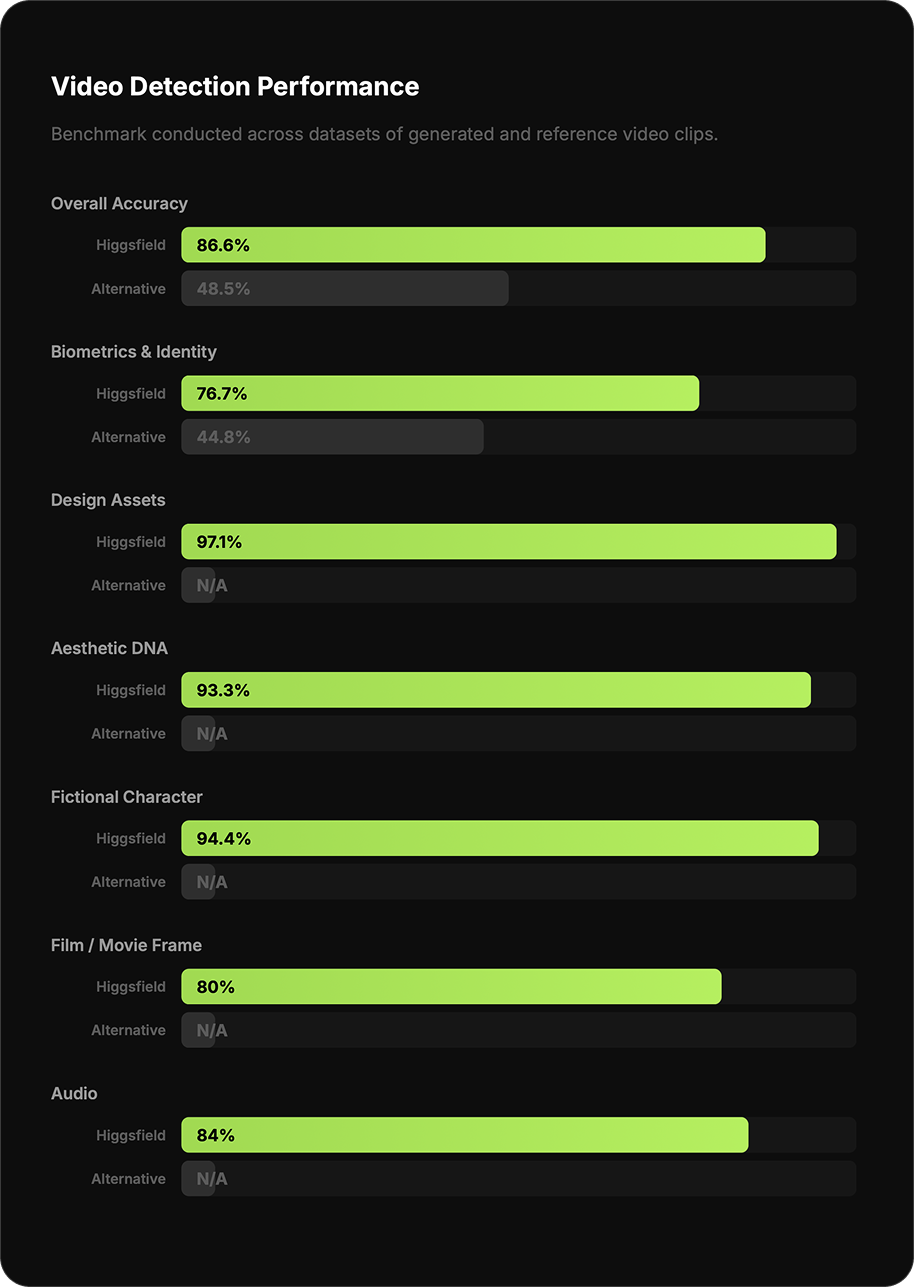

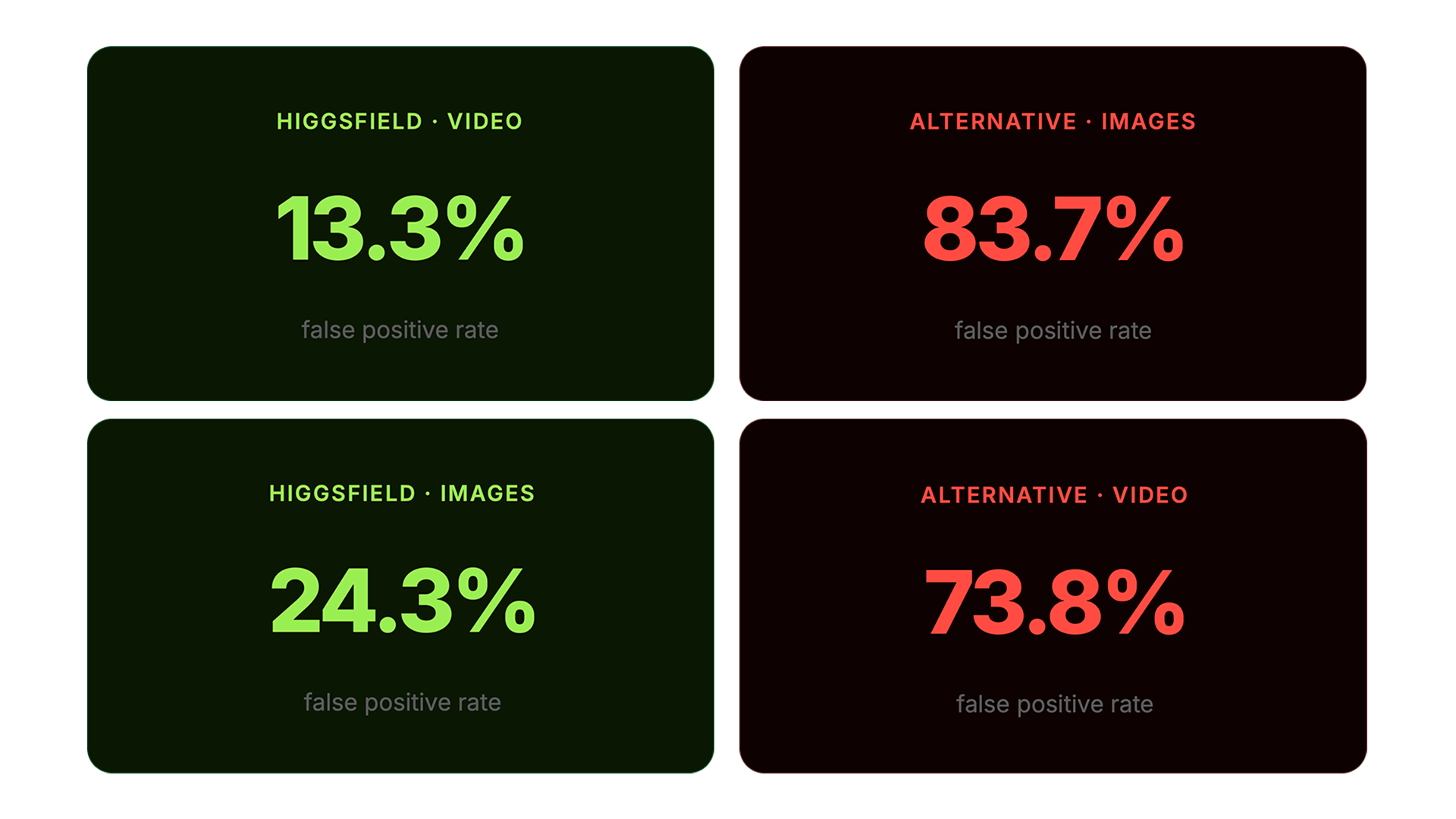

To validate the system's efficacy, the Higgsfield Research Team conducted a benchmark study across a dataset of AI-generated and reference media. In video detection, Higgsfield's model achieved 86.6% overall accuracy, compared with 48.5% for a leading third-party alternative. Higgsfield's model also produced far fewer false positives, flagging incorrect similarities in video only 13.3% of the time, compared with 73.8% for the alternative solution.

Why This Matters Now

Higgsfield has grown 100x in the last three months. A large share of usage now comes from production teams that regularly run commercial campaigns on the platform. As AI-generated content becomes a core part of professional production pipelines, creators and teams are increasingly expected to consider similarity, likeness, and whether a generated asset may resemble something protected.

By scoring AI-generated assets prior to delivery, production teams can identify potential likeness or copyright overlaps before publishing or submitting materials. This evaluation helps shorten review cycles and reduces the risk of discovering similarity conflicts after a campaign has shipped, when adjustments are costly and complex.

This guide breaks down what the feature does, what it checks, and how to use it as part of a safer production workflow.

What Similarity Scoring Does

Similarity Scoring acts as a guidance system for AI-generated media.

Instead of acting as a hard blocker, the system gives creators visibility into how generated outputs may relate to recognizable visual patterns. It evaluates generated visuals and highlights potential similarity signals that may require attention before publishing. Each signal is reported with the following details.

Type of Similarity

Identifies what kind of match was detected: character, brand, likeness, or style.

Reference Source

Points to the potential source the generated content may resemble.

Location in Media

Pinpoints exactly where in the image or video the similarity occurs.

Similarity Score

A percentage-based score indicating the strength of the detected similarity.

The system does not restrict the final output. Creators remain fully in control of their work. Review the signals and decide whether to adjust.
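The four result fields described above could be modeled as a small record type. The following is a minimal sketch under a hypothetical schema: `SimilaritySignal`, `SimilarityType`, `signals_to_review`, the example entries, and the 50% threshold are illustrative assumptions, not part of the Higgsfield product or any published API.

```python
from dataclasses import dataclass
from enum import Enum


class SimilarityType(Enum):
    # The four kinds of match named in this guide.
    CHARACTER = "character"
    BRAND = "brand"
    LIKENESS = "likeness"
    STYLE = "style"


@dataclass(frozen=True)
class SimilaritySignal:
    """One flagged similarity (hypothetical schema, not the product's API)."""
    similarity_type: SimilarityType
    reference_source: str   # what the generated content may resemble
    location: str           # where in the media, e.g. a frame range
    score_percent: float    # strength of the detected similarity, 0 to 100


def signals_to_review(signals, threshold_percent=50.0):
    """Return signals at or above the threshold, strongest first.

    The threshold is an illustrative choice; nothing is blocked either way.
    """
    flagged = [s for s in signals if s.score_percent >= threshold_percent]
    return sorted(flagged, key=lambda s: s.score_percent, reverse=True)


# Invented example data, for illustration only.
example = [
    SimilaritySignal(SimilarityType.BRAND, "Example Brand logo", "frames 120-180", 82.0),
    SimilaritySignal(SimilarityType.STYLE, "Example Film look", "entire clip", 35.5),
    SimilaritySignal(SimilarityType.CHARACTER, "Example Character", "frames 96-168", 61.0),
]

for signal in signals_to_review(example):
    print(f"{signal.score_percent:5.1f}%  {signal.similarity_type.value:<10} "
          f"{signal.reference_source} ({signal.location})")
```

Sorting by score keeps the strongest signals at the top of a review list, while the final decision to adjust or publish stays with the creator.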

How Similarity Scoring Works

Similarity Scoring operates as a multi-step analysis pipeline that evaluates generated media and compares detected signals with known similarity patterns.

1. Content Analysis

Frames and audio signals are analyzed. Visual and semantic signals are extracted.

2. Pattern Matching

Extracted signals are compared with known similarity patterns. Potential similarities are detected.

3. Similarity Scoring

The system calculates a similarity level and outputs a percentage-based score.

4. Creator Review

The creator evaluates the results and decides to publish or revise.
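The four steps above can be sketched as a toy pipeline. This guide does not say how signals are extracted or matched, so everything here is an assumption made for illustration: frames are stand-in feature vectors, matching uses cosine similarity, and the function names and the 70% attention threshold are hypothetical.

```python
import math


def extract_signals(frames):
    """Step 1 (stub): treat each frame as an already-computed feature vector.

    A real system would run visual and audio models at this point.
    """
    return [[float(x) for x in frame] for frame in frames]


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def match_patterns(signals, known_patterns):
    """Step 2: for each known pattern, keep the best-matching frame."""
    best = {}
    for index, vector in enumerate(signals):
        for name, pattern in known_patterns.items():
            similarity = cosine_similarity(vector, pattern)
            if name not in best or similarity > best[name][0]:
                best[name] = (similarity, index)
    return best


def score_matches(best):
    """Step 3: convert similarities to percentages, clamping negatives to 0."""
    return {name: (round(max(0.0, sim) * 100, 1), idx)
            for name, (sim, idx) in best.items()}


def review(scores, attention_percent=70.0):
    """Step 4: list the patterns a creator may want to look at."""
    return sorted(name for name, (pct, _) in scores.items()
                  if pct >= attention_percent)


# Invented toy data: two frames and two known patterns.
frames = [[1, 0, 0], [0, 1, 0]]
known_patterns = {"logo_a": [1.0, 0.0, 0.0], "character_b": [0.0, 0.0, 1.0]}

scores = score_matches(match_patterns(extract_signals(frames), known_patterns))
print(scores)
print(review(scores))
```

Keeping each step as a separate function mirrors the staged description: the last step only reports, and the decision to publish or revise remains with the creator.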

Detection Categories

The system evaluates multiple categories of visual similarity that commonly appear in generative media.

| Category | Description |
|---|---|
| Actor Likeness | Visual resemblance to a known actor, including stylistic alterations |
| Brand / Trademark | Recognizable brand elements, logos, or trademarked taglines |
| Design Assets | Distinct design elements from known properties and franchises |
| Fictional Character | Characters resembling known fictional figures from popular franchises |
| Film / Movie Frame | Scenes resembling specific film shots or compositions |
| Cinematics & Direction | Recognizable cinematic styles or directorial signatures |
| Aesthetic DNA | Overall visual style similarity to specific directors or films |
| Real Person | Resemblance to real public figures or identifiable individuals |
| Biometrics & Identity | Facial or biometric similarity to identifiable persons |
| Audio | Music and audio content incorporated into video output |

How the Model Performs

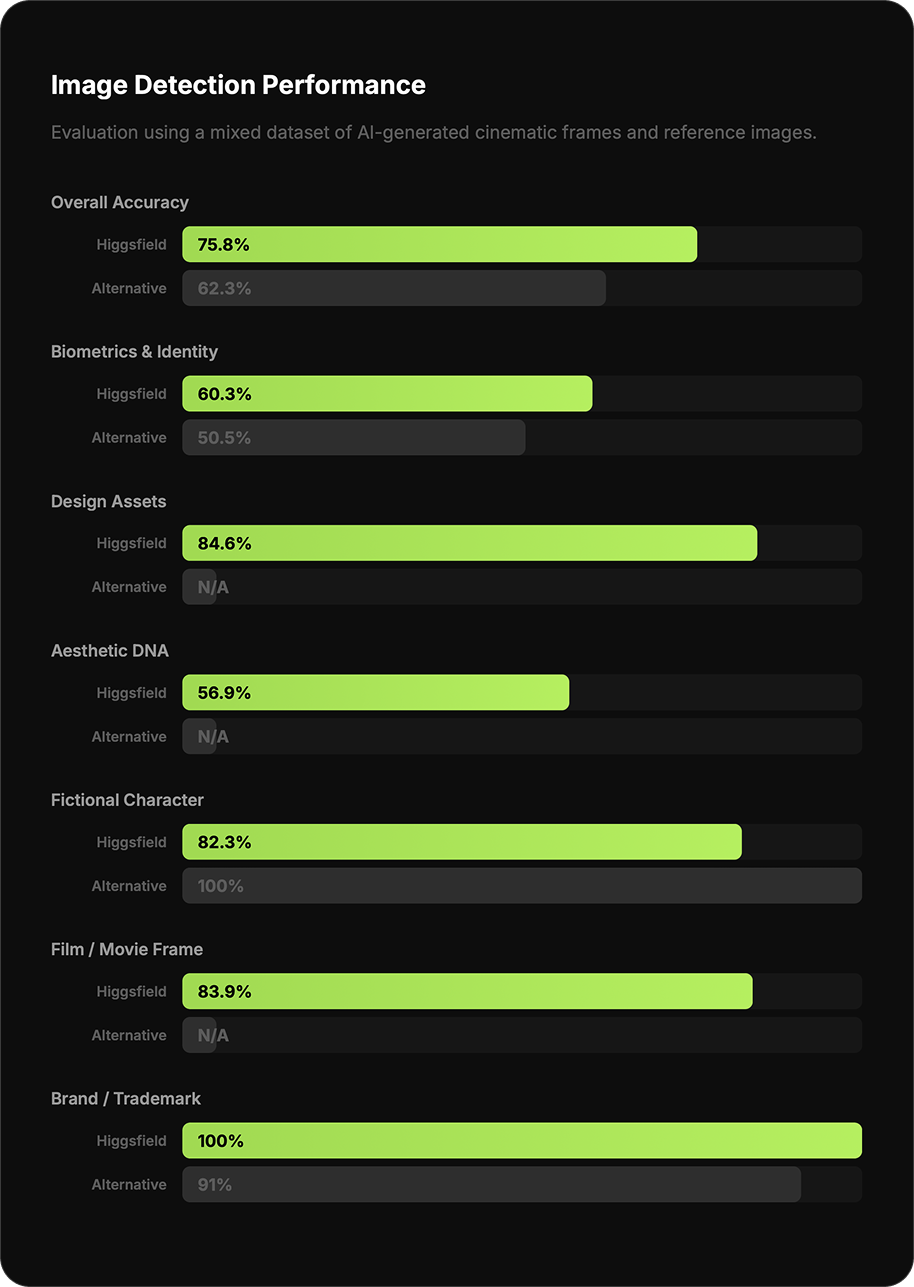

The Higgsfield Research Team conducted an internal benchmark study across image and video datasets, measuring detection accuracy and false positive rates against a leading third-party alternative.
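For readers who want to compute the same two metrics on their own labeled data, the sketch below uses the conventional definitions of accuracy and false positive rate. The guide does not state the exact definitions used in the benchmark, so treat these as an assumption; the counts are invented for illustration and are not the benchmark's data.

```python
def accuracy(tp, tn, fp, fn):
    """Share of all items classified correctly: (TP + TN) / total."""
    total = tp + tn + fp + fn
    return (tp + tn) / total if total else 0.0


def false_positive_rate(fp, tn):
    """Share of truly non-similar items that were flagged: FP / (FP + TN)."""
    negatives = fp + tn
    return fp / negatives if negatives else 0.0


# Invented counts: 100 clips, 50 with a real similarity and 50 without.
tp, fn = 40, 10   # similar clips that were flagged / missed
tn, fp = 45, 5    # non-similar clips left alone / wrongly flagged

print(f"accuracy: {accuracy(tp, tn, fp, fn):.1%}")
print(f"false positive rate: {false_positive_rate(fp, tn):.1%}")
```

Reporting both numbers matters because a detector can reach a high flag rate simply by flagging almost everything, which shows up as a high false positive rate.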

Video Detection Performance

The benchmark was conducted across datasets of generated and reference video clips.