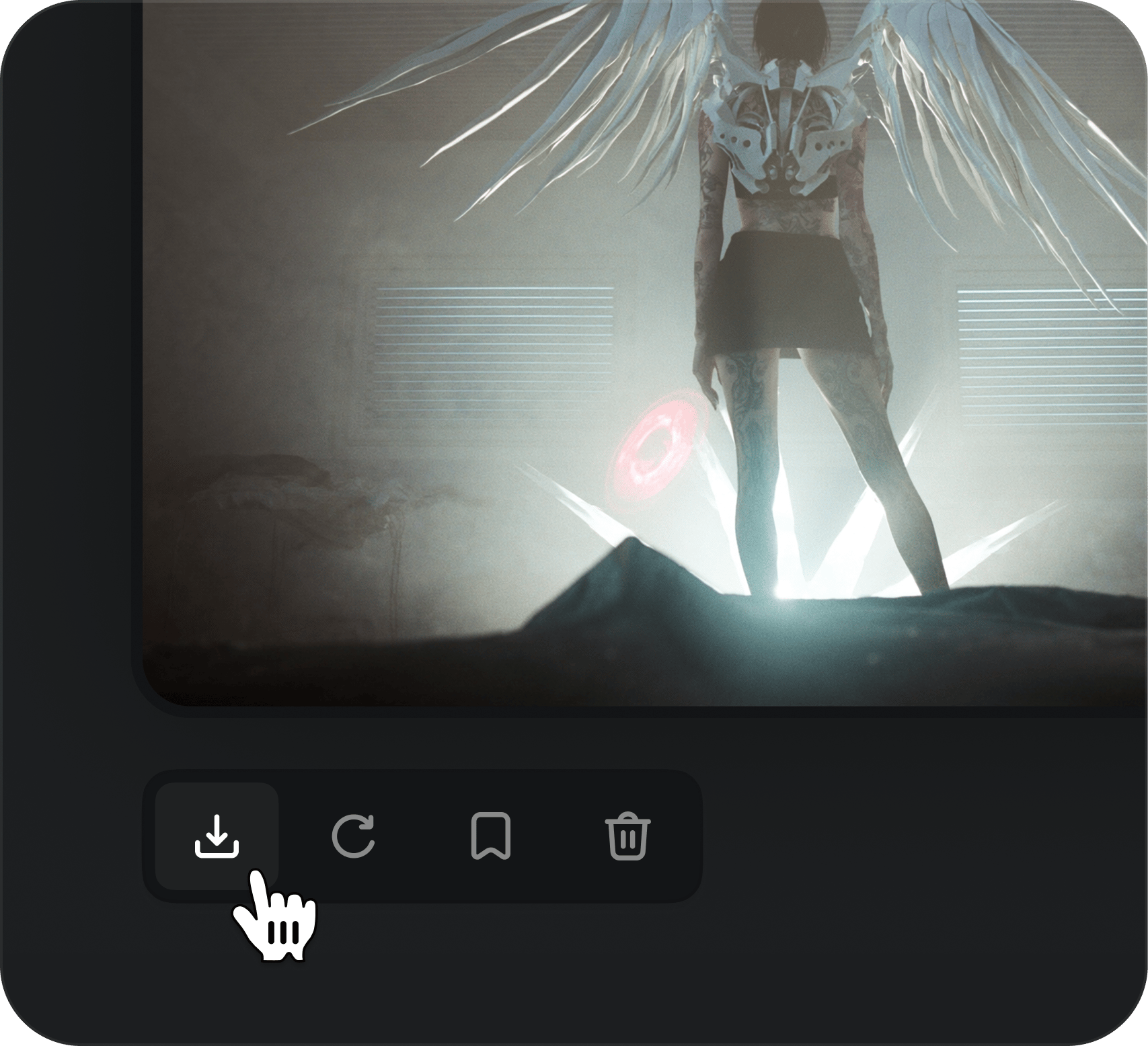

It's gone from a side tool to something I rely on daily

I've been using Higgsfield for a few months now, and it has honestly changed how I approach projects. The speed is insane, and the quality is more than enough for professional work. It's gone from a side tool to something I rely on daily.